Capturing the perspectives of children and young people (CYP) is key to understanding how your work is experienced, the impact it has, and where there may be opportunities to improve. However, evaluating with children and young people brings specific challenges and considerations.

Through our work supporting Impact & Insight Toolkit (Toolkit) participants, we regularly hear questions about how to approach evaluation with CYP in ways that feel engaging, accessible, and useful. The suggestions in this blogpost are inspired by those conversations and by what we have observed while working with organisations that evaluate work with CYP.

These suggestions are not intended as formal guidance, but we hope they offer a practical starting point. We encourage you to consider these ideas alongside further reading and research when designing your own evaluations with CYP.

Understand what you are looking for

As with any evaluation, start by being clear about the feedback you want to collect. Are you aiming to understand how your programme supports creativity and learning? Or do you want to understand how inclusive your activities feel to participants? Focussing your questions on the aspects that matter most ensures your surveys stay relevant and engaging.

Being clear on your primary goal will also guide which questions you ask and how you structure your evaluation. This is particularly useful when you want to:

- Understand the impact of your work on the people who experience it

- Tell a clear and compelling story about your programme

- Collect data that is actionable and aligned with your goals

For more on this, see our blogpost, “Why Working Backwards Works”.

It is also helpful to distinguish between question types. Outcome-focussed questions measure the impact of your programme (e.g. creativity, learning, inclusion), while experience-focussed questions capture how participants feel about taking part. Separating these helps ensure your evaluation covers both the difference your work makes and what it is like to take part.

When working with children and young people under 16, parental or guardian consent is usually recommended. Always communicate clearly with both guardians and participants about what the evaluation is for and how their responses will be used. Being transparent about why you are collecting feedback can help participants respond honestly and thoughtfully.

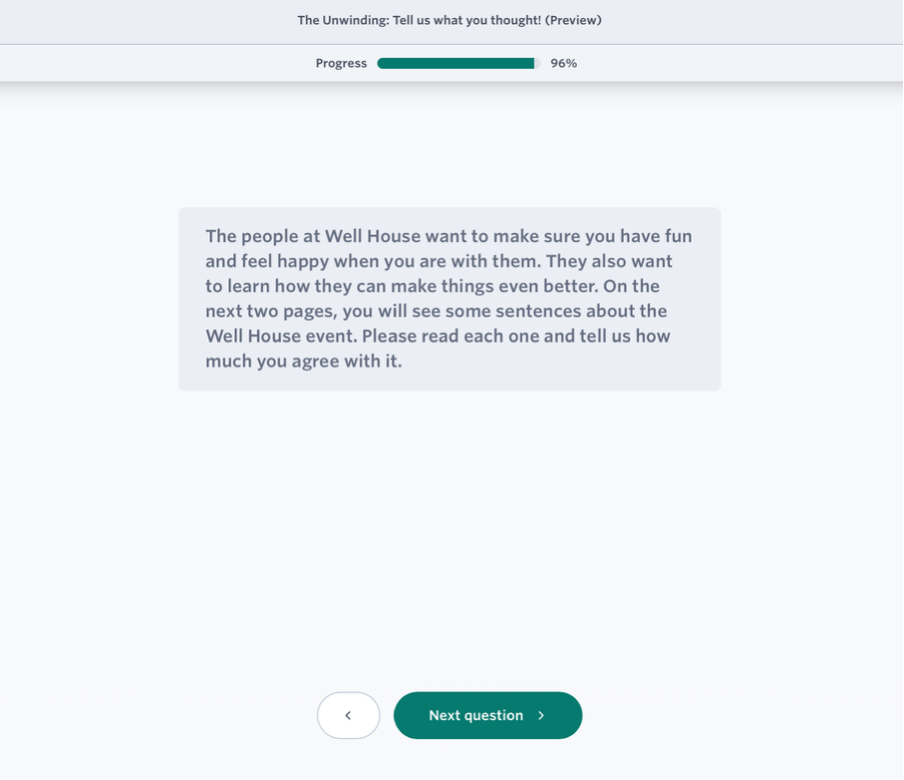

Practical tip: Add an introductory message at the beginning of your survey, either by customising a pre-written message from the Question Bank or by writing your own, so that respondents clearly understand why you are asking these questions.

Keep it short and relevant

When you design surveys for children and young people, keep them brief and focussed. There is a statistically significant relationship between survey length and the proportion of the survey that respondents skip: the longer the survey, the more of it goes unanswered.

Practical tip: Keep your survey as short as possible, and position the most important questions at the start.¹

Attention spans vary among younger respondents, and long surveys can cause them to disengage. A concise, targeted set of questions helps participants stay engaged and provide feedback that better reflects their experience.

Consider age group and abilities

Design your survey with your audience’s age and abilities in mind. Complex or difficult-to-understand questions can create unintended barriers to participation, and you risk missing valuable insights.

For example, younger children are likely to respond best to simple, direct language, while older children and young adults may engage more with thought-provoking questions. Consider creating different versions of your survey for different age groups to ensure every respondent can participate fully.

Counting What Counts has recently published a collection of new survey questions, labelled as Linguistically Easy Read (LER). These use more accessible language while still enabling you to collect comparable data on the same outcomes. While these questions haven’t been directly tested with children and young people, they align with Easy Read standards and were informed by expert transcription from A2i and cognitive testing by Shift Insight.

Practical tip: Use clear, straightforward language to prevent confusion and misinterpretation. Including LER questions in your Culture Counts surveys helps remove barriers and enables more respondents to share their experiences.

For a detailed account of the LER question development, see the full Accessible Survey Questions Project report.

Use a proxy survey

Sometimes, using a proxy survey can help you capture feedback from younger participants who may find it difficult to complete a survey on their own. In this approach, an adult (such as a parent, guardian, or facilitator) completes the survey on behalf of the young respondent.

The key is to design questions that allow the adult to accurately reflect the child’s thoughts and experiences. Proxy surveys can be particularly useful for collecting richer, more detailed feedback from participants who might struggle to articulate their responses directly.

Practical tip: Use the ‘Interview’ delivery method to conduct proxy surveys. This allows you to collect multiple responses per device and makes the feedback process more conversational and less intimidating for young participants.

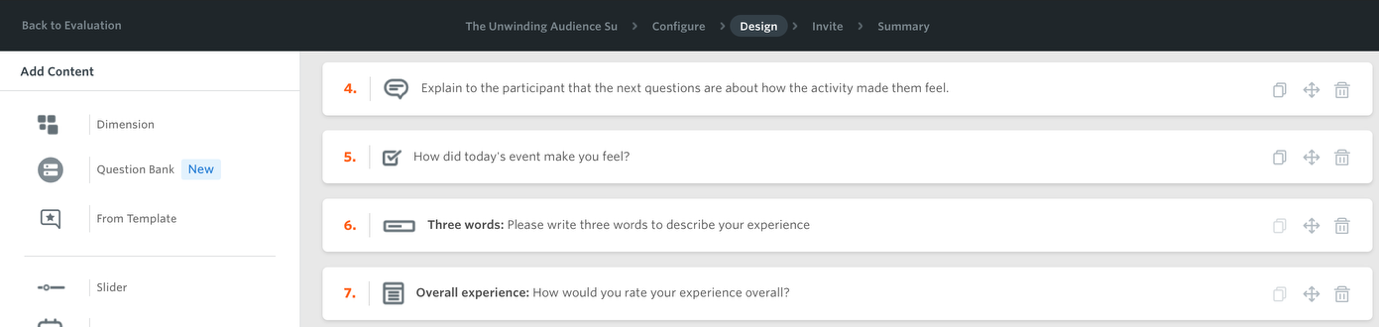

You can also use the Message content type within the survey to guide facilitators as they go. For example, add short prompts such as: “Explain to the participant that the next question is about how the activity made them feel.” This can help facilitators introduce questions clearly and consistently.

Make it engaging and fun

For many children, completing a survey can feel like schoolwork, which may dampen their enthusiasm. Exploring creative and interactive approaches can encourage participation.

The importance of play in learning activities has long been established within education. Framing your evaluation as a game can motivate children who might otherwise be reluctant to share their views. Physical activity during games can boost attention and focus, helping participants provide more thoughtful feedback.

One organisation participating in the Toolkit project used a ball game in which children threw balls into colour-coded buckets corresponding to different answer options. A variation on this is a ‘step to answer’ game, in which large cards or mats are laid out on the floor, each representing a survey answer. Children move to the card that reflects their response to a question. Afterwards, you or a facilitator can record their choices and import the data into Culture Counts.

Again, you can use the Message content type to support the facilitator running the activity. For example, include prompts such as: “Tell participants the next question is about how confident they felt during the workshop”. Clear prompts help ensure questions are introduced in a consistent way.

Practical tip: Use the ‘Data Import’ feature to upload externally collected data into Culture Counts. Feedback gathered through creative or alternative methods can be transcribed into a data template and uploaded directly into your evaluation. For step-by-step written and video guidance, see: Importing External Data.

For a more creative way of gathering feedback, ask children to draw or visually represent how a piece of work made them feel. Once the activity is complete, you can group responses into a handful of broad categories (for example: excited, curious, proud, challenged) when importing the data into Culture Counts. Note that this categorisation depends on the judgement of the person interpreting the drawings.

These creative approaches can feel less intimidating and more engaging than traditional surveys. They are especially useful for children who struggle to articulate their thoughts verbally or who do not speak English.

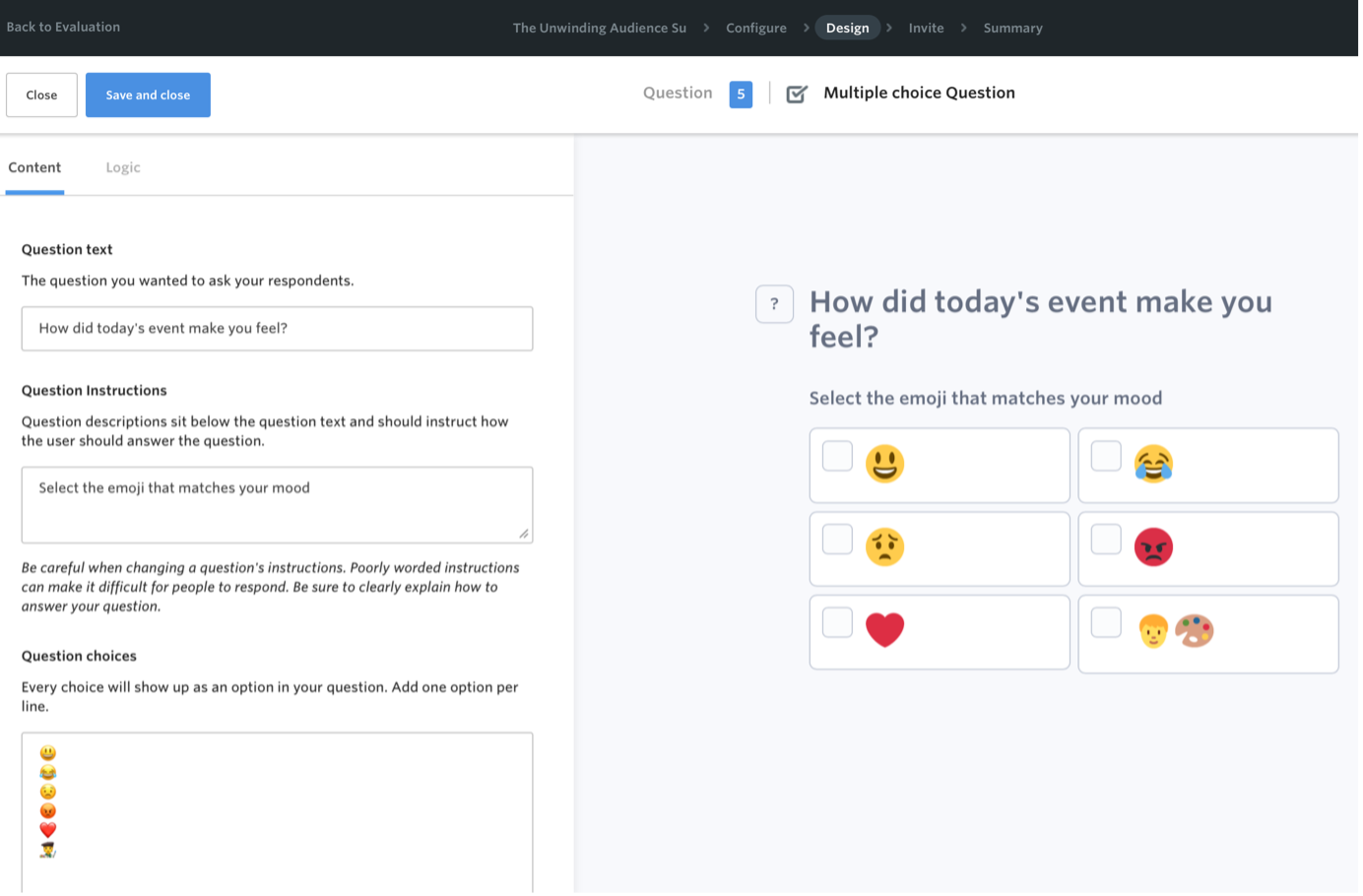

Practical tip: Culture Counts supports emojis within questions. This visual element can be an effective way of connecting with younger respondents: emojis make questions feel friendlier and more relatable, and help respondents understand the sentiment behind each question and the answer options available.

Closing thoughts

By being clear on what you want to learn, keeping surveys concise, and incorporating creative approaches, you can gather actionable insights that genuinely reflect children and young people’s experiences. This approach helps you tell a clear story about the impact of your work and make data-driven decisions that put their needs and experiences at the centre.

These suggestions are offered as practical guidance based on what we have learned through supporting Toolkit participants to evaluate work with children and young people. They are not a substitute for specialist research expertise, so we encourage you to use them alongside further reading and research when shaping your own evaluations. Below are some specialist resources to help you get started.

Further reading:

Evaluation with children and youth, BetterEvaluation: https://www.betterevaluation.org/methods-approaches/themes/evaluation-children-youth

Outcome and Experience Measures, Child Outcomes Research Consortium: https://www.corc.uk.net/outcome-measures-guidance/

References:

¹ Why Are Respondents Not Completing Your Survey?

Featured image credit: Count Chris on Unsplash