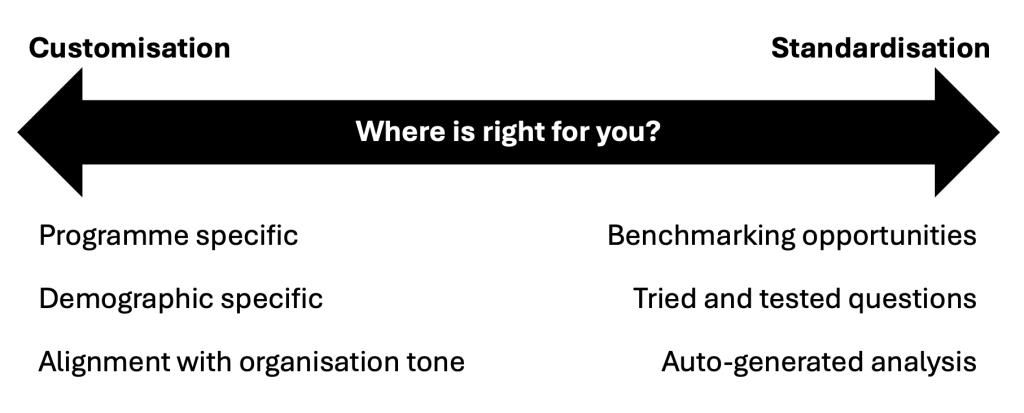

Does standardisation or customisation work best when evaluating your work? The approach you take will depend on your aims for the evaluation. In this blog post, we consider the pros and cons of each.

Introduction

When designing surveys, there are three main approaches you can take. Importantly, they’re not mutually exclusive, so organisations rarely need to choose just one route.

You can:

- Write new custom questions for every survey

- Write custom questions that work for your organisation and repeat them across your surveys

- Use standardised, inbuilt questions

In practice, most organisations benefit from a blend of all three. The key is understanding what each approach offers and choosing the right balance for your goals.

Standard Toolkit approach

The Impact & Insight Toolkit primarily uses the third of these approaches, drawing on standardised questions from both Dimensions and the Question Bank (QB). This is the default approach because it offers several key advantages:

- Confidence in using tested and well-considered questions

- Built-in analysis and reporting options

- Strong benchmarking opportunities using quantitative data

- Ability to compare results across surveys

- Seamless integration with our AI Thematic Analysis tool

- Alignment with external frameworks and requirements (e.g. Illuminate)

For many organisations, these benefits save time, reduce uncertainty, and make evaluation findings easier to act on.

However, as with all approaches, there are some trade-offs.

Considering the alternatives

Custom questions offer greater flexibility and precision. You can:

- Use language that aligns perfectly with your organisation’s brand

- Reflect the needs of a specific demographic or programme

- Reduce ambiguity by naming specifics

This can be particularly valuable when you need to capture something unique about your work, or when you need to ensure the survey resonates with your intended respondents.

If you use the same custom question wording across your surveys and change only the subject each time, the question stays clear for respondents. However, this approach loses the ease of inbuilt comparison and benchmarking that standardised questions provide. In other words, greater flexibility can mean giving up some efficiency.

Finding the right balance

Ultimately, this is all about balance. Think of it as a traditional scale:

At one end: Standardisation

At the other: Customisation

The right position depends on what you need your evaluation to do.

To decide where you should sit on that scale, consider your aims for the evaluation.

If you don’t intend to compare your data with other datasets (either your own or public-facing) or to use it for more formal reporting, a fully customised approach may be the right route for you.

However, if you do want to compare your data or use it in formal reporting, we recommend moving a little more towards the standardised end of the scale.

A blended approach

Does that mean we think that you shouldn’t have any custom questions in your evaluation? Absolutely not!

A typical balanced survey might include:

- 4 standardised Dimensions questions

- 3 standardised Question Bank questions

- 2 custom questions that are used consistently across all surveys

- 1 custom question that is unique to this specific survey

The ‘right’ breakdown will look different for everyone.

If you need support in finding the right balance for your organisation, get in touch!

Illustration by DA VECTOR on Unsplash